AI analysis reads like a single confident whole — but it mixes verifiable facts and invented inferences in the same breath. One instruction forces the split before any conclusion is drawn. Now you know which parts to act on.

// On this page

For the first six months I used AI for stock analysis, I was reading the answers wrong. I was asking good questions and getting back fluent, plausible replies — a mixture of true things, half-true things, and things that sounded true because they were phrased confidently. I had no way to tell which was which without re-doing the work myself.

The fix turned out to be one paragraph in the prompt. Once I started using it, the quality of every subsequent conversation went up sharply. Not because the model got better — because I stopped asking it to do two jobs in one breath.

The change is a filter prompt that forces the model to separate observable facts from assumptions before it draws any conclusion. The prompt is below, with what it does, why it works, and how to use the output.

The filter prompt

Before asking for any conclusion, I now require the model to split its raw material into two explicit lists.

LIST A — Observable facts: things that are verifiable from published sources (filings, announcements, audited accounts, market data). Do not include anything that requires interpretation to establish.

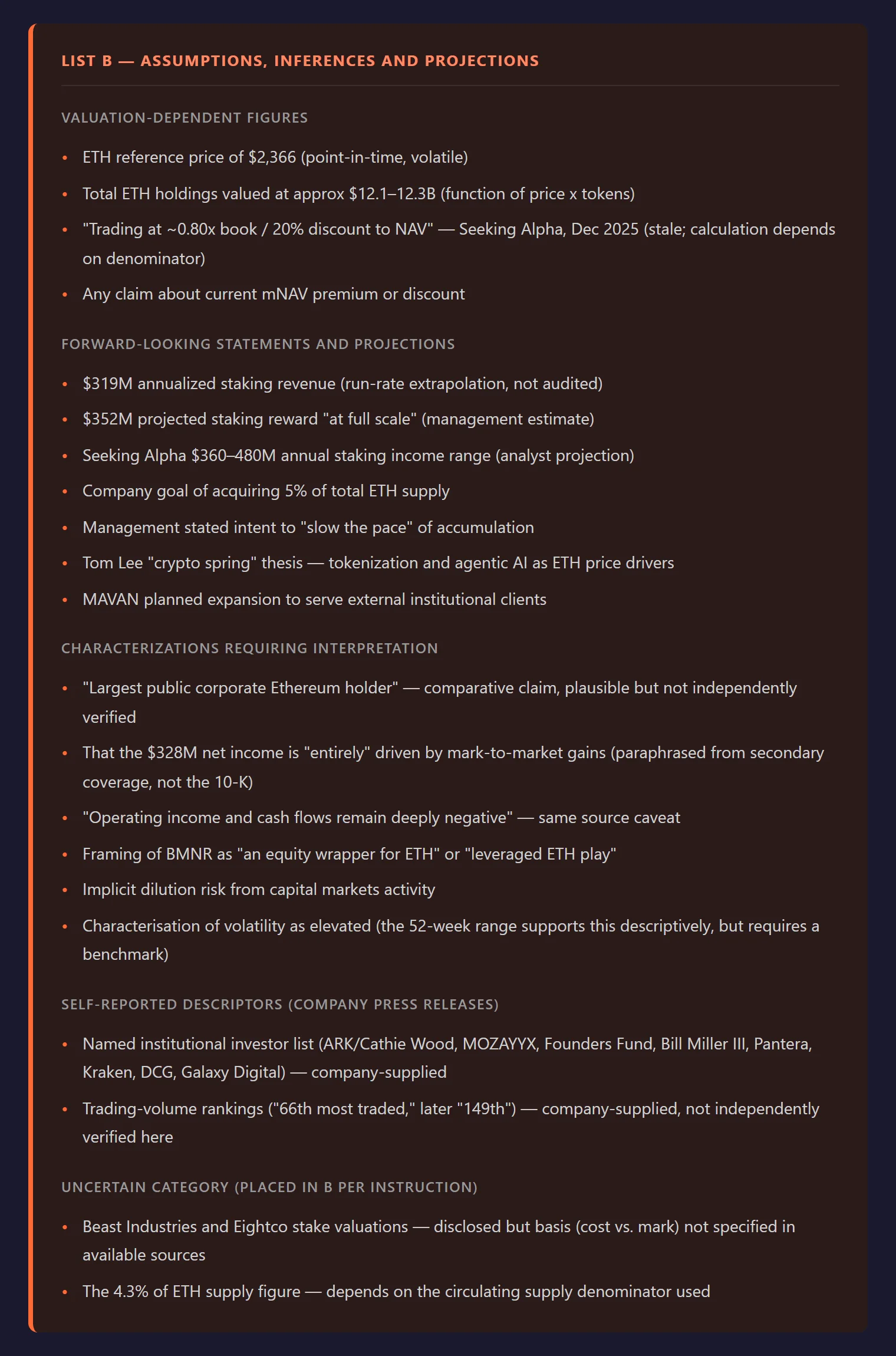

LIST B — Assumptions and inferences: things that appear in the investment case but depend on interpretations, management forecasts, analyst projections, or extrapolations from the observable data.

If you’re uncertain which list something belongs on, put it in List B and note the uncertainty. Do not skip this step. Do not move to analysis until both lists exist.

That’s it. That’s the change.

You’ll notice it does nothing fancy. It doesn’t invoke a persona, doesn’t ask for chain-of-thought, doesn’t request a particular format beyond two lists. It just refuses to let the model collapse fact and inference into a single soup.

The problem with a single answer

When you ask a language model “what should I think about this company?”, you get back something that reads like analysis. It has paragraphs. It cites figures. It draws conclusions. It sounds like the output of a human analyst.

What’s actually happened underneath is that the model has stitched together verifiable facts (from filings, news, market data) with plausible-sounding extrapolations (from patterns in its training data) into one unbroken answer. The seams are invisible. A revenue figure from the last annual report sits in the same sentence as a guess about next year’s margin trajectory, in the same tone, with the same confidence.

You read it, you nod, you absorb the conclusion. You have no idea which 20% of what you just read is load-bearing fact and which 80% is articulate speculation.

This is fine when you’re using AI for brainstorming. It’s not fine when you’re about to commit capital.

Why this works

Three reasons, in order of importance.

It makes the model show its working in a way you can audit. A single paragraph of analysis is impossible to fact-check without re-doing it. Two lists are trivial to fact-check. You scan List A, spot anything that doesn’t belong (a forecast, an opinion, a “should be”), and move it to List B yourself. Five minutes of skimming buys you the thing you actually wanted: a clear view of what is known versus what is being assumed.

It makes the assumptions visible to you. This is the bigger win. Most bad investment decisions are not made because someone got the facts wrong. They’re made because someone treated an assumption as a fact without realising. List B forces every assumption into the open. Once you can see them written down — the management’s recovery plan will deliver the margins they’re guiding to, the sector’s cyclical pressures have peaked, the new product line will scale — you can interrogate them one by one. You can ask which ones are load-bearing and which are decorative. You can ask what would have to be true for each one to fail.

It changes the tone of everything downstream. When you then ask “given List A and List B, what’s your view?”, the model’s answer is structurally different from what you’d have got without the split. It cites assumptions explicitly. It says things like “if the assumption in B3 holds” rather than asserting things flatly. It becomes much harder for the model to drift back into confident-sounding speculation, because it now has to reference its own scaffolding.

What to do with the lists

Don’t just read them. The lists are a working surface, not a deliverable.

Four things, in this sequence — the order matters:

-

Audit List A. Move anything that’s actually an inference into List B. Models will smuggle assumptions into List A under phrasing like “the company is well-positioned to…” or “demand has clearly shifted toward…”. These are not facts. They are framings that use facts. Move them.

-

Rank List B by load-bearing weight. Not all assumptions are equal. Some are background scenery that doesn’t really affect the case. Others are the case. A prompt that gets the model to do this work for you: “For each item in List B, mark it as LOAD-BEARING (the conclusion depends on this) or SCENERY (the conclusion survives if this is wrong). Be strict — most items should be scenery.” The two or three that come back load-bearing are the ones you actually need to think about.

-

Ask the model to attack the load-bearing ones. A new prompt: “For assumption B[n], give me the strongest case that this is wrong. Cite specific evidence.” This is where the real work happens. You’re no longer using the model to validate your view; you’re using it as an adversary against the specific points your view depends on.

-

Write down what you’d have to see to change your mind. This is for you, not the model. For each load-bearing assumption, write a one-line invalidation condition. “If quarterly margins come in below 14% next reporting cycle, B2 is dead and the case is dead with it.” Now you have a checklist for the next data point, instead of waiting to feel differently.

A worked example

I ran this on BMNR — a company I hold that treats Ethereum as its primary treasury asset.

The naive question was simple: “BMNR holds Ethereum as its primary treasury asset. Should I be adding to my position?”

The response was fluent and thorough. It cited the 52-week range, named the annualised staking revenue figure, described BMNR as “essentially a leveraged ETH wrapper with a staking yield kicker” (Claude.ai, 14 May 2026), and then mentioned — positively — that Seeking Alpha had noted a roughly 20% discount to NAV in late 2025. That last point was the one I was closest to acting on. Discount to NAV is how you size a position in an asset-holding company. At 20% below what it owns, the stock looks cheap.

Then I ran the filter prompt. The same discount figure reappeared in List B:

“Trading at ~0.8x book / 20% discount to NAV — Stale (Seeking Alpha, Dec 2025); calculation depends on denominator. Any claim about current mNAV premium or discount.”

December 2025 was five months earlier. The stock had moved sharply in both directions since then. The discount figure I was treating as a current valuation signal was nearly half a year old — and flagged as methodology-dependent on top of it.

That is the assumption I hadn’t noticed I was making: that a number from a secondary source was current. It appeared in the analysis as a data point, so it read as a fact. The filter moved it to List B in one pass, and I went to look for a more recent mNAV figure before doing anything else.

What this doesn’t fix

This is not a magic prompt. It does not turn a language model into an analyst.

The model can still get items in List A wrong. It can hallucinate a number from a filing, misattribute a figure, or simply invent something that sounds like it came from a published source. The split makes these errors easier to spot, but you still have to spot them. List A is a starting point for verification, not a verified output.

The model can still produce a List B that misses the most important assumption. It will catch the obvious ones (margin trajectory, demand assumptions, multiple expansion). It tends to miss structural ones (the assumption that the regulatory environment stays roughly the same; the assumption that the company’s accounting choices don’t shift; the assumption that the management team stays). You have to add those yourself.

The split also doesn’t help if you’re asking the wrong question in the first place. Garbage question, two carefully-separated lists of garbage. The discipline only kicks in once you’ve already framed the problem properly.

The deeper point

The reason this small change has such a large effect isn’t really about prompting. It’s about what you’re using AI for.

If you’re using it as an oracle — tell me what to think — no prompt structure will save you, because the failure mode is in the framing, not the wording. You’ll get an answer, you’ll find it persuasive because it’s articulate, and you won’t notice the parts that were quietly invented.

If you’re using it as a clerk — help me see what’s actually here — then the List A / List B split is the cleanest way to get the work done. You’re not asking it to think for you. You’re asking it to lay out the raw material so that you can think.

That’s the Prompt Stack idea in a single instruction. Force the structure that the model would otherwise paper over. Read what comes back as material to be audited, not as conclusions to be accepted. Do the actual reasoning yourself, with the lists as a working surface.

The rest of the Prompt Stack — role, filter, risk, verdict — is just this same idea applied at every stage. If you only ever adopt one piece of it, adopt this one.

It’s the cheapest discipline I’ve found for keeping AI useful instead of confidently wrong.

Verdict

What it fixes

Invisible assumptions presented as facts. Once List B exists, you can interrogate the specific points your view actually rests on.

What it doesn't fix

Wrong facts in List A, missing assumptions the model didn't catch, or a badly framed question to begin with.

Confidence

High — it's Stage 2 of the Prompt Stack, and the one I'd keep if I had to drop the other three.

Ben documents AI experiments against his own investment portfolio — real money, human analysis, sceptical use. About Ben →